What Newsrooms Still Don’t Understand About the Internet

You can’t report on a culture war and also be an invisible bystander

Welcome to Galaxy Brain, a newsletter from Charlie Warzel about technology and culture.

On Tuesday, Ed Zitron wrote an important piece on coordinated online attacks against reporters. His primary argument is that newsrooms still don’t understand the nature of the culture war they’re sending their reporters out into each day. When their employees are the subject of bad faith networked harassment campaigns, they frequently fail to protect their reporters or, more troublingly, they sometimes punish their staffers for attracting perceived ‘controversy.’

News organizations often address bad faith attacks on reporters by repeating the language of the attackers, in part because they’re worried about not looking ‘impartial.’ What they ought to do, he says, is dismiss the attacks outright for what they are: propaganda intended to delegitimize their institutions.

Zitron argues that big news organizations “need to invest significantly in exhaustive, organization-wide education about how these online culture war campaigns start, what they do, what their goals are (intimidating critics and would-be critics) and are not (their goals are not reporting, or correcting facts, for example), the language and means they use to execute, and how they use the newsroom against itself.” Only then, he writes, can newsrooms respond “from a position of power.”

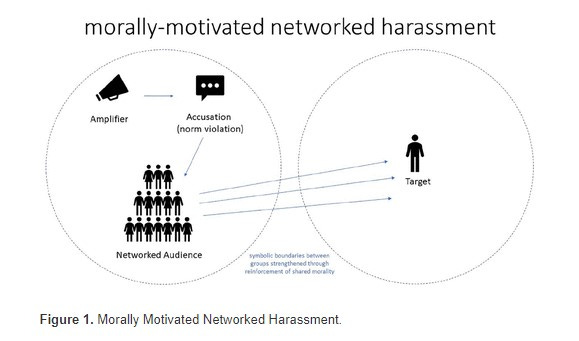

Zitron’s post reminded me of a fascinating paper I read last week by UNC associate professor Alice E. Marwick on “Morally Motivated Networked Harassment.” The paper offers exactly the kind of detailed explanation of how and why online harassment takes place at scale that newsrooms are lacking. Marwick focuses on a specific variety of online harassment called “morally-motivated networked harassment,” which is the type that’s most prevalent in arguments about politics, cancel culture, and media bias.

Networked harassment is when a large audience descends on a person and overwhelms them: the speed and volume are what do the most damage. But, as Marwick notes, the larger audience of harassers is usually convened (sometimes unwittingly, often purposefully) by an amplifier, which is usually a high-profile or well-followed social media account or community. The important function of the amplifier is to take a person’s comment or idea out of the author’s original context and audience and drop it in front of the amplifier’s own audience (this phenomenon is called context collapse).

This process is particularly effective at generating conflict at scale. Marwick describes what it looks like quite well:

For instance, a progressive activist advocating deplatforming alt-right influencers might be labeled “anti-free speech” or “censoring” by right-wing network participants, while members of a left-wing network view them differently. In this case, the two networks have different priorities, values, and community norms. However, the context collapse endemic to large social platforms allows for networks with radically different norms and mores to be visible to each other.

Networked harassment is particularly thorny to navigate because rules against it are not always easy to enforce. As Marwick notes, most platforms’ Terms of Service define harassment in a different, more traditional context (one individual repeatedly harassing another) and tend not to take into account the amplifier factor. Here’s how one person Marwick interviewed described the disconnect as it relates to tech platform enforcement:

The Twitter staff might recognize it as harassment if one person sent you 50 messages telling you suck, but it’s always the one person [who] posted one quote tweet saying that you suck, and then it’s 50 of their followers who all independently sent you those messages, right?

Put another way: serial amplifiers — especially the savvy ones who don’t themselves harass but signal their disapproval to their large audiences knowing their followers will do the dirty work — tend to get away with launching these campaigns, while smaller accounts get in trouble. A good amplifier knows how to create plausible deniability around their behavior. Often they say they are ‘just asking questions’ or ‘leveling a fair critique at a person or public figure.’ Occasionally, this is true, and individuals inadvertently kick off networked harassment events with good faith criticisms. Sometimes, people with big accounts forget the size of their audience, whose behaviors they can’t control. These social media dynamics are messy because they vary on a case by case basis.

Still, morally-motivated networked harassment is extremely common and intense. Marwick’s paper suggests that’s because the tactic “functions as a mechanism to enforce social order.” And the enforcement tool in these instances is moral outrage.

“A member of a social network or online community accuses a target of violating their network’s norms, triggering moral outrage,” she writes. “Network members send harassing messages to the target, reinforcing their adherence to the norm and signaling network membership.” As anyone who has spent time online knows, morally charged content performs better online. It resonates widely and provokes strong responses among all humans. “As a result, people are far more likely to encounter a moral norm violation online than offline,” Marwick notes. This is partly why spaces like Twitter, where different audiences interact easily, feel especially toxic.

What I appreciate most about Marwick’s paper is that it wrestles with the complexity of this type of networked harassment. In her studies, Marwick finds that “networked harassment is a tactic used across political and ideological groups and, as we have seen, by groups that do not map easily to political positions, such as conflicts within fandom or arguments over business.” Everyone across social media is constantly signaling affiliations with their desired groups. And each of those groups is trying to enforce a social order of its own based on its preferred moral codes. Dogpiling abounds.

Marwick also demonstrates that the subjects/circumstances with the potential to trigger harassing incidents are almost unlimited. For the paper, she spoke to people who’d been targets of harassment campaigns for the following reasons: tweeting, “Man, I hate white men” in response to a video of police brutality; criticizing the pre-Raphaelite art movement; refusing to testify in favor of a student accused of sexual harassment; banning someone from a popular internet forum; criticizing expatriate men for dating local women; and celebrating the legalization of gay marriage in the United Kingdom by posting gifs of the television show “Sherlock.”

It is important to recognize the universality of this phenomenon, in part so that we don’t fall into amplifier modes ourselves. But we also cannot let the fact that anyone can be the subject of networked harassment obscure the fact that morally-motivated networked harassment isn’t evenly distributed.

Harassment, Marwick argues, “must also be linked to structural systems of misogyny, racism, homophobia, and transphobia, which determine the primary standards and norms by which people speaking in public are judged.” She writes that women who violate traditional norms “of feminine quietude” experience disproportionate amounts of harassment, which is linked to their gender. She calls such characteristics “attack vectors,” another word for vulnerabilities that increase the likelihood of online harassment. For example, while a nonbinary individual might be harassed over comments about anything, their nonbinary status will frequently become the attack vector.

What does all this have to do with newsrooms?

Though Marwick’s paper is not about harassment of reporters, much of the harassment leveled at journalists takes this morally-motivated, networked form. Just as important: the frequent, complex, and extremely varied kinds of harassment that Marwick describes are essentially the environment that newsrooms ask their reporters to go out into each day.

Not only are reporters participating in these online ecosystems when they’re promoting their stories or looking for stories but they are also frequently covering the culture war arguments that take place on the platforms. By writing about or commenting on the online culture wars, reporters, wittingly or not, become a part of those culture wars. Similarly, news organizations influence and shape some of these fights, whether or not they intend to. The nature of the social web makes it incredibly difficult — if not completely impossible — for the press to be some kind of invisible bystander.

The fact that many news organizations think that they can engage or even merely observe and document these environments, and not also influence them, leads me to believe that newsroom leaders still don’t understand the complex dynamics of social media. Many leaders at big news organizations don’t think in terms of “attack vectors” or amplifier accounts; they think in terms of editorial bias and newsworthiness. They don’t fully understand the networked nature of fandoms and communities or the way that context collapse causes legitimate reporting to be willfully misconstrued and used against staffers. They might grasp, but don’t fully understand, how seemingly mundane mentions on a cable news show like Tucker Carlson’s can then lead to intense, targeted harassment on completely separate platforms.

And yet these newsroom leaders privilege stories that touch divisive, morally-charged issues. They want reporters to cover controversial subjects and the most active and inflammatory communities because the emotional charge that makes these people and spaces so volatile also makes for a good, sharable story — for news.

I’m not suggesting newsroom leaders don’t think the internet is a dangerous, often toxic place. A number of the people I’ve met who run or have run legacy newsrooms are reflexively skeptical of social media. Most of them didn’t come up in newsrooms dominated by social media and lament The Discourse™ as a liability for their staff and institution. I’ve been at drinks with one or two of these people as they’ve told me that they’ve given up on Twitter altogether (interestingly, they used the same phrase “I don’t do Twitter anymore”) citing it as a distraction from the real work of journalism. Sure.

But what I think they miss is that, for better or worse, The Discourse™ is part of the work these days. In many cases, it is the context into which the work is dropped.

I don’t mean to provoke the ‘Twitter is not real life’ crowd by suggesting that newsrooms need to make Twitter the assignment editor (please don’t do this!). I also don’t think that the most impactful journalism needs to come from the Extremely Online. Great journalism that speaks truth to power and has real world impact doesn’t need to have anything to do with an internet connection. But the larger cultural forces and conversation that surround that work — how it will be interpreted, weaponized, distorted and perhaps remembered? That conversation will happen in these online spaces. A piece of shoe leather investigative reporting that never mentions the internet will eventually find its way into a culture war fight online and will likely be used to further somebody else’s propaganda campaign. Likely, this will involve discrediting the reporter who produced it.

This is where “I don’t do Twitter” (or insert platform here) becomes a liability. Not because Twitter is some great space for conversation or because being connected to it all day is the best use of a newsroom leader’s time and energy. But because the leader has no good sense of the environment into which their organization is dropping a reporter or a story. Their ignorance is not a virtue but a liability, for their staffers and for their organization at large.

More commonly, legacy newsroom leaders will graze on social media. They’re extremely busy running large institutions through chaotic news cycles and so they dip in and get a sense of the conversations from the social media bubbles they’ve created. They tend to have a vague sense of online dynamics but it often lacks nuance. I’d argue this posture is the most dangerous, as it is the one most likely to overreact to bad faith attacks from trolls. They see outrage building, realize it is pointed at their institution, and they panic. They panic, in part, because they don’t understand where it’s coming from or why it’s happening or what the broader context for the attack is. This is when leaders make decisions that play into the hands of their worst faith critics.

If you run a newsroom — of any size — you need an internet IQ of sorts. Unfortunately, this kind of savvy is only really gained through experience or study. I spent a long time talking about Marwick’s paper because it’s an illustrative example of how the dynamics of online spaces and conversations are complicated. They require study and expertise, especially if you plan to navigate them. But, if you spend real time in these spaces, you start to instinctively understand what’s happening.

Is internet savvy the most important skill an editor in chief ought to have right now? I don’t know. But currently, the skillset seems like an afterthought, especially in larger institutions. That’s a mistake. So much about newsgathering (talking to sources, framing stories, even managing writer personalities) is about developing an intuition and calibrating your sense of proportion. Is this person lying to me? Is this story an outlier or indicative of a more universal experience? The same thing is true about navigating social media. As The Atlantic’s Derek Thompson mentioned this week, it’s critical to develop the right “thickness of skin” for the internet. And there’s just no good shortcut.

If you’re interested in looking at the kind of policies that come out of a newsroom run by people who instinctively understand the internet, take a look at Defector Media’s harassment policy. They cover expenses for the harassed, provide alternative housing if necessary, offer paid time off, work with law enforcement, provide legal help, and even provide a proxy “who can temporarily manage a targeted employee’s social media accounts.” The big difference, beyond the benefits, is that the organization sees harassment as an unfortunate and often difficult to avoid byproduct of reporters doing their job in a hostile environment. They trust their employees and stand by their work. They don’t get easily spooked by random jabronies trying to drag the site into a culture war argument and rashly suspend or fire a reporter. But they also instinctually know when shit is getting out of hand.

I get that navigating online bullshit is hard. I wish none of these words I wrote were necessary. I wish journalism wasn’t fully intertwined with commercial advertising platforms that connect millions of people with each other at once. I wish we didn’t outsource our political and cultural conversations to these commercial advertising platforms. I wish newsroom leaders and reporters could completely ignore these spaces and focus on a pure version of journalism. I don’t like this system any more than they do. But it’s the one we’ve got. Newsrooms don’t understand the internet they send their journalists onto. That needs to change.

Very good piece, and rings true. But there's another variable to how journalists are perceived and treated online that you touched upon but I think deserves more consideration. You acknowledge it can be problematic when journalists themselves become the story by entering the fray of this or that cultural skirmish. You can't be a soldier one moment and a referee the next. When someone develops a reputation as a partisan, no one thinks of them as an impartial messenger of news.

So, in this messy discourse, where does professionalism come in? What does it even consist of? What does it mean to be "professional" vs. "unprofessional" in fulfilling one's role as a journalist when the dynamics and incentives of the platforms, personal careerism, the media business model, etc. all align to incentivize an incredibly sectarian mode of engagement?

If an organization wants to have a reputation for accuracy and impartiality, (not for its own sake, but because being a trusted source of information based on verifiable knowledge is a worthy project) representatives MUST adhere to a very constrained code of conduct, or that reputation will collapse. Is that even a realistic goal in today's media environment?

Good stuff. I think newsrooms are certainly not the only institution that doesn't quite understand how to interpret Twitter (or email, or other low-barrier-to-entry ways to give input). For example, here in Denver if City Council members get a bunch of negative emails or electronically filed comments about a project, they interpret that as "oh my constituents hate this." It's the most motivated and venomous who tend to dominate the discussion, and if the decision-makers wrongly interpret that as actual public opinion, we're all the poorer for it.